Arkadium: a neuro-symbolic agent anchored to the Meta-Globàlium for structural verification of human judgment in artificial intelligence systems

Opengea SCCL — Barcelona, Catalonia

Abstract

Large Language Models (LLMs) exhibit four structural deficits that scale alone cannot resolve: correctness, transparency, generalization, and efficiency. Recent reasoning models — OpenAI's o-series, DeepSeek-R1, Gemini Thinking, Anthropic Opus and Sonnet — show progress in domains with formal verifiers (mathematics, code, logic) but collapse in human domains where no ground truth exists: ethics, politics, social judgment, public deliberation. This paper presents a concrete technical proposal to overcome this verifier gap.

The technical contribution has two complementary faces: (a) a dispersion completeness function 𝓗(r) ∈ [0, 1] that quantifies, over an explicit relational ontological metastructure, the extent to which a response r integrates the dialectical poles of the model — an epistemic property we call globalistic truth, a non-monological conception of truth that recognizes the irreducible plurality of its access modes (objective and subjective, theoretical and practical, phenomenal and noumenal, plasmatic and mundane), in line with the hermeneutic and communicative tradition (Gadamer, Habermas, Heidegger); and (b) an external canonical basis of ontological directions — the Meta-Globàlium — onto which any model can be projected, compared, and audited in a portable, model-agnostic manner, providing a genuinely external top-down interpretability layer — directions not discovered post hoc from inside the model, but derived from a reflective synthesis of human judgment. This second face is structurally complementary to mechanistic interpretability agendas (Anthropic's sparse autoencoders) and representation engineering (Zou et al. 2023): if the former discovers what is inside the model, ours offers an external, shared, revisable ontological vocabulary to name it. The methodological move is from predictive models — which extrapolate patterns from the training corpus — to judgment models — which evaluate their own output against an explicit relational ontology. Arkadium is the first functional materialization of this proposal: a conversational agent, RAG framework, and 3D Metamodeler that compute 𝓗(r) at runtime over each response. We argue that this path — open and sovereign — is structurally necessary to overcome the current ceiling of brute-force scaling combined with textual constitutions. Demonstration available at arkadium.ai.

Philosophical genealogy. This work is inspired by the Globàlium model (Xirinacs 1997, doctoral thesis defended at the University of Barcelona), inherited from the Catalan tradition of integrative thought (Llull → Sibiuda → Pujols → Xirinacs): a 4D hypersphere with 8 primary categories, 26 in the minor model, 80 in the major model, organized along four dialectical dimensions. The Globàlium is philosophical heritage, developed by Xirinacs and cited here as inspiration; it is not part of our work. (i) The Meta-Globàlium (Berenguer / Opengea, 2024–2026) is the formal and computational extension of the Globàlium, from which new axioms and principles are derived and over which the function 𝓗 is defined. (ii) Arkadium (Berenguer / Opengea, 2024–2026) is the agent operating anchored to the Meta-Globàlium as substrate — the verifiable technical artifact that materializes the proposal and tests it. In the Xirinacsian lineage, from this dialectical fullness of truth derives, as normative inheritance, the notion of the Good as harmony between parts — the Good derives from this dialectical fullness, it does not substitute it.

We argue that the necessary movement is from predictive models — which extrapolate patterns from the training corpus — to judgment models — which evaluate the dispersion completeness of their own output against an explicit relational ontology. Arkadium is the first functional materialization of this movement. Our orientation is, moreover, anthropologically explicit: an AI that teaches you to think, not an AI that thinks for you. We argue that technology must equip humans with culture — understood as shared and revisable software — that makes them more self-sufficient, not more dependent; and that a shared ontological framework between humans and artificial agents, far from being an academic luxury, is the structural condition for this relationship to be one of emancipation rather than delegation.

Keywords: AI alignment, neuro-symbolic AI, judgment models, structural verification, shared ontology, AGI, fractal recursion, non-monological truth verification, globalistic truth, dispersion completeness, Process Reward Model, representation engineering, ontological hyperspace, retrieval-augmented generation, Globàlium, Meta-Globàlium, Arkadium, dialectical reasoning, scalable oversight, computational wisdom, top-down interpretability, model-agnostic audit, portable interpretability, canonical direction basis, AI auditability.

Table of contents

1. Meta-Globàlium and Arkadium: two levels of our work

The proposal of this manifest is articulated in two levels of our work — the Meta-Globàlium and Arkadium — anchored in a prior philosophical source of inspiration, the Globàlium of Lluís M. Xirinacs. This source must be distinguished from what is properly our work: the Globàlium is Catalan philosophical heritage developed by Xirinacs and cited here as inspiration; the Meta-Globàlium and Arkadium are formal and computational evolutions developed by Jordi Berenguer and Opengea (2024–2026).

Source of inspiration — The Globàlium (Xirinacs 1997). A global philosophical model of reality, inscribed in the Catalan tradition of integrative thought — Ramon Llull and his Ars Magna (13th c.), Ramon Sibiuda and the Theologia Naturalis (15th c.), Francesc Pujols and the Concepte general de la ciència catalana (1918), through Eugeni d'Ors —, it is the contemporary culmination of a persistent ambition: to offer an exhaustive and revisable cartography of human knowledge capable of accommodating "everything, from God to an espadrille" (Xirinacs 1997). Geometrically, it is a four-dimensional hypersphere; structurally, it contains 8 primary categories, 26 in the minor model and 80 in the major model, articulated on four dialectical dimensions (TEO ↔ PRA, SUB ↔ OBJ, NOU ↔ FEN, PLA ↔ MON) and four principles (identity, alterity, holicity, universality). It is philosophical heritage — a map, not the territory — and a source from which we depart but which does not constitute our work properly speaking.

Xirinacs already anticipated, in 1997, the application of the Globàlium to artificial intelligence systems. In the same doctoral thesis he formulated this intuition with literal clarity. Describing one of the exercises presented at the end of the work, he writes:

«[a small book,] presented as an annex, made "by machine" by the model itself, as a prelude to what should be asked of a true artificial intelligence»

This is not a retrospective metaphor: it is an explicit declaration of computational intent, formulated when large language models did not yet exist. The original intuition is validated now, decades later, when contemporary AI systems reveal exactly the architectural needs that his model already offered: explicit and computable topology, systematic dialecticity, humanly inspectable granularity (Miller 7±2), scalable fractal recursivity, and externality with respect to natural language. The Meta-Globàlium and Arkadium are the technical materialization of the Xirinacsian prelude — the encounter between a philosophical vision formulated in 1997 as documented anticipation, and a computational capacity that can now finally host it fully. What Xirinacs called "true artificial intelligence" is precisely what this proposal claims: not an AI that extrapolates patterns, but an AI structurally anchored to a global model of human knowledge.

Three limitations acknowledged by Xirinacs himself. The Globàlium is, in its author's words, a «first well-defined and grounded formalization of the intuition of globality» (Xirinacs 1997, §22), explicitly left open to further development in three respects that the Meta-Globàlium addresses. (i) Discrete resolution. The 80 categories condense into single nodes affine concepts with different shades of meaning, and the model does not resolve their internal placement; Xirinacs himself admits this and points to «a fractal deepening, dependent on the magnitude "resolution" or "scale"» (1997, §22), a path the Meta-Globàlium takes with the recursively subdivisible fractal architecture of 6,400 metacategories (80 thematised expressions × 80 categories). (ii) Absence of an operative method. The Globàlium is presented as a visual instrument; Xirinacs makes it explicit that «we leave to others the development of "Modelology"» (1997, §187) and warns that its use belongs not to the machine but «to art» (§490.012); the Meta-Globàlium responds with the formalised Global Method — auditable 8-station inference cycle instantiated in three basic turns along the great circles (Application Turn ANA → SIN → AMO → EXP, Orientation Turn, Knowledge Turn), with the Solve-Coagula meta-operation as directional iterator along the FEN ↔ NOU axis. (iii) Absence of axiomatic foundation. The plasmatic categories — regenerative core and seed of the rest of the model — are defined in the Globàlium by philosophical intuition, without a system of axioms capturing their primary essence and enabling their principles to be derived; the Meta-Globàlium contributes an axiomatic formalisation with six principles (Regenerativity, Interdependence, Plurisingularity, Totality, Mutability, Integrity) derived from eight fundamental mathematical operations (equality/polarity, distinction/correspondence, similarity/divergence, convergence/difference) over the neutral cube, enabling more rigorous and global levels of generalisation.

(i) The Meta-Globàlium (Berenguer / Opengea, 2024–2026) is the formal and computational extension of the Globàlium: it gives the original more resolution and makes it operative on a computational substrate. Six main contributions: (a) an axiomatic formalization with six derived principles (Regenerativity, Interdependence, Plurisingularity, Totality, Mutability, Integrity) and eight maximal dialectics derived from the neutral cube — fourteen structural elements that cover the geometric relations of the model; (b) a Cartesian mapping of the 80 distinct categories and a canonical whitelist of codes (ANA, SIN, AMO, EXP, BEL, COS, IDE…) for unambiguous anchoring in embeddings and retrieval; (c) a two-layer fractal architecture that scales up to 6400 metacategories — Layer P (universal principles: the geometric and dialectical grammar) × Layer T (80 thematic expressions: Subject, Disciplines, Virtues, Pedagogy, Ideology, Mathematics, Philosophy, Linguistics, Archetypes, Health, Physics…), each a crystallization of the same elements over a central theme, with a specific semantic reading per position; (d) the canonical inscription of the fourth dimension as tempeternity (portmanteau of time + eternity), transversal radial axis PLA ↔ MON that gives each pole its three projections PLA/NEU/MON; (e) the Global Method as auditable inference architecture, instantiated in three basic voltes (loops) over the great circles of the 8 cardinals — each with its own four-operation cycle — and the Solve-Coagula meta-operation that iterates directionally FEN → NOU; and (f) a dispersion completeness function over the relational ontology, which quantifies the extent to which a response integrates the dialectical poles of the model. This completeness is what we call globalistic truth — a non-monological conception of truth that recognizes the plurality of access modes (OBJ+SUB, TEO+PRA, FEN+NOU, PLA+MON). In the Xirinacsian lineage, from this dialectical fullness derives, as normative inheritance, the notion of the Good as harmony between parts: prior structural condition — the dialectical completeness of the response — that the verifier does not usurp.

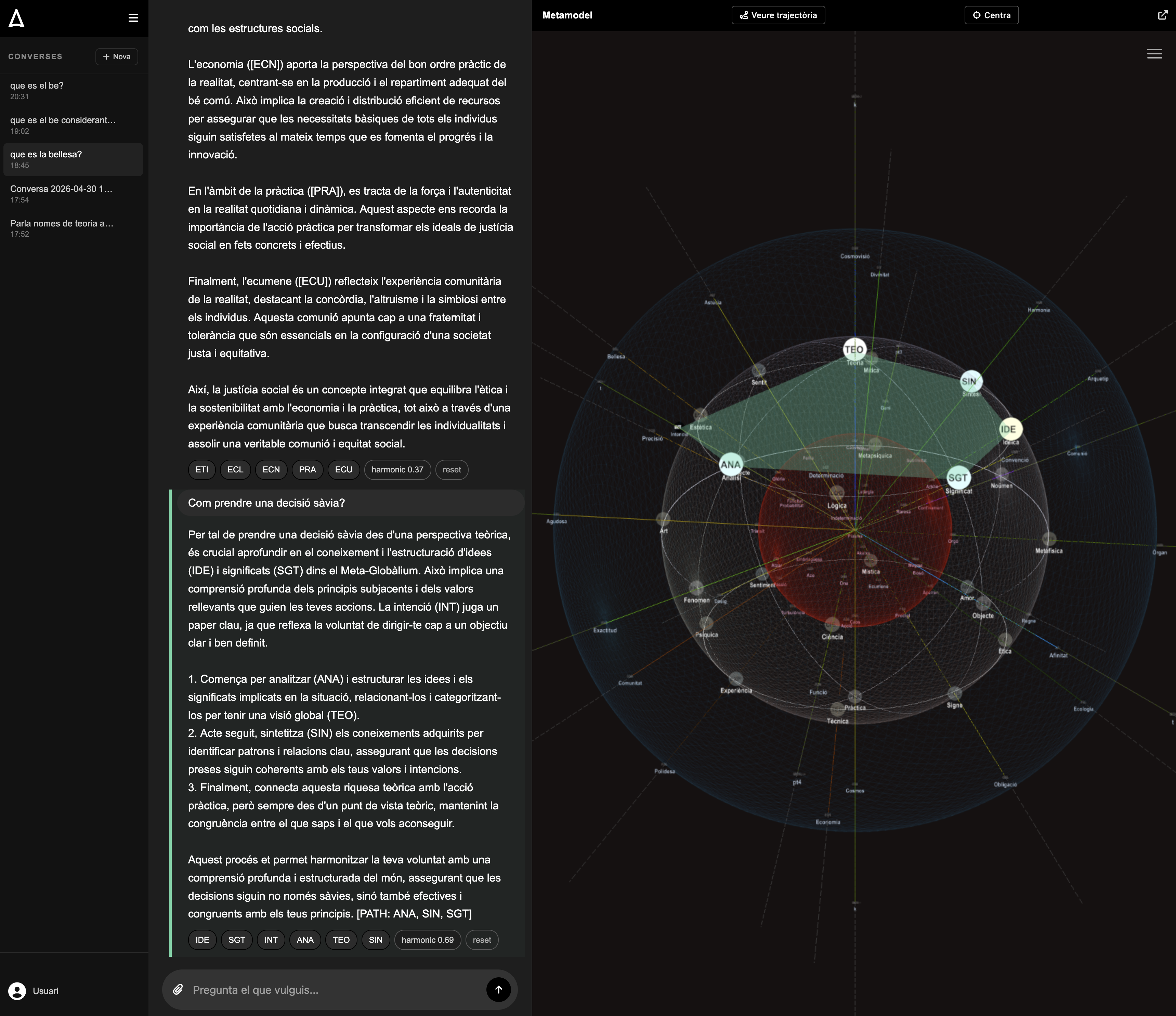

(ii) Arkadium (Berenguer / Opengea, 2024–2026) is the agent and ecosystem operating anchored to the Meta-Globàlium. It comprises a public web application (arkadium.ai), a RAG framework with two vector knowledge bases (public KB-A over the categories, per-user private KB-B with multi-tenant separation), a runtime structural verifier that computes the dispersion completeness function 𝓗(r) — operationalization of globalistic truth — on each response, a self-correction re-prompt loop, and a 3D Metamodeler that dynamically illuminates the cited categories. Arkadium is the verifiable technical artifact that materializes the proposal and tests it under real conditions.

The natural metaphor illuminates the relationship: the Globàlium is the original philosophical map that inspires us; the Meta-Globàlium is the computable map and method that we derive from it; Arkadium is the vehicle that navigates it. The two levels of our work presuppose and cite the source, but do not substitute it. The rest of the manifest develops the technical justification — why this type of substrate is needed, how its components are articulated, and why Arkadium constitutes the first functional materialization submitted to public inspection.

2. Introduction: the verifier problem

In 2023, in the notebook Saviesa Artificial (Berenguer 2023), we argued that the four central deficits of AI systems — correctness, transparency, generalization, and efficiency — would only be solved by recovering the virtues of symbolic AI without abandoning the power of neural networks. Three years later, reasoning models based on reinforcement learning over chains of thought (DeepSeek-AI 2025; OpenAI 2024) have shown notable results only in domains with objective verifiers (mathematics, code, logic). Apple Machine Learning Research (Shojaee et al. 2025) has documented how these models collapse when complexity exceeds a threshold and how reasoning effort measured in tokens paradoxically decreases with the problem.

Outside the formal domains — ethical judgment, political deliberation, arbitration of interests — there is no objective loss function. Process Reward Models (PRMs) built with human feedback fall apart through reward hacking. Constitutional AI (Bai et al. 2022) and Deliberative Alignment encode principles as textual lists, but these constitutions are interpreted freely by the model itself without an external structural substrate to anchor them.

What we propose here is that the verifier problem for human domains is, right now, the central problem of AI alignment, and that it has a concrete technical path of solution: replacing the textual constitution with a relational ontological metastructure over which to mathematically define a dispersion completeness function. This metastructure is the Meta-Globàlium described in §1; the agent that uses it as operative substrate is Arkadium. What the verifier measures is, in operative terms, the extent to which the response integrates the dialectical poles of the model — an epistemic property we call globalistic truth (a non-monological truth, plural in its access modes). In the Xirinacsian tradition, from this dialectical fullness derives the notion of the Good as harmony between parts; the verifier does not decide what the Good is — it collects the prior structural condition that this tradition ties to dialectical completeness.

It is useful to frame the diagnosis in more general terms. Current LLMs are essentially predictive models: given an input, they generate the most probable output according to the regularities of their training corpus. Reasoning models — the o-series, R1, Claude with extended thinking — add a layer of internal verification that works in domains with ground truth (mathematics, code). In human domains, however, this extension fails, and the solution is not more prediction: it is a change of nature — moving from predicting to judging. A judgment model does not ask "what would be statistically expected for a human to say here?", but rather "does the output I am generating integrate the dialectical poles necessary for a full understanding of the phenomenon?". The difference is architectural: judgment requires an external reference to the corpus — an explicit relational ontology on which the model can project its own output and measure its completeness. This is the conceptual transition that Arkadium technically materializes: from the predictive model conditioned by corpus frequency to the judgment model conditioned by the geometry of the Meta-Globàlium.

The Meta-Globàlium is precisely this structured vocabulary with explicit relations: 80 distinct categories, eight dialectical poles, derivable axioms, a Cartesian mapping that projects each concept onto a known position. It is, by construction, intelligible at once by humans (the axes are reflective — subject/object, theory/practice, phenomenon/noumenon, plasma/world) and by computational systems (the canonical codes are unambiguous anchoring for embeddings and regularization functions). Thus shared ontology ceases to be a conceptual desideratum and becomes an operative substrate for evaluation: every output of an AI model can be projected onto the same map that the human uses to situate it, and the conversation between the two parties unfolds on common coordinates rather than remaining suspended in divergent textual interpretations.

This manifest argues the proposal in ten additional sections. First, the nature of the verifier gap (§3). Then, the formal description of the Meta-Globàlium as structural verifier (§4). We discuss the relationship with top-down interpretability (§5), the field's convergence toward neuro-symbolic AI (§6), the arguments for efficiency and sovereignty (§7), the connection with scalable oversight and the field of computational wisdom (§8). We present the proof of concept — the Arkadium agent — deployed at arkadium.ai (§9), a comparative analysis with existing proposals (§11), and the implementation roadmap with its limitations (§12–13). We conclude with an invitation to use Arkadium and to extend it (§14).

2.b The architectural roots: why attention alone does not suffice

The verifier problem identified above is often framed as an emergent issue — a defect to be patched as systems scale up. We argue that it is not emergent: it is the direct consequence of an architectural decision taken in 2017.

When Vaswani et al. (2017) proposed Attention is all you need, the title was not rhetorical. The transformer architecture was a deliberate renunciation of every explicit structural prior that earlier neural architectures had carried — recurrence, locality, hierarchical composition, syntactic structure, ontological scaffolding. The design wager was that, given enough data and parameters, all required structure would emerge implicitly from associative attention alone, including any internal world model the system might need.

Eight years later, the wager has produced systems of unprecedented fluency and an architectural ceiling that the field is now publicly acknowledging. The world model that emerges from purely attentional training is implicit, distributed, and opaque — and therefore not auditable from outside the network. The industrial responses to its failure modes — RLHF, Constitutional AI (Bai et al. 2022), Deliberative Alignment (OpenAI 2024), sycophancy patches, reward modelling pipelines — operate as external textual layers over a substrate that has, by design, no internal structural ground for them to anchor to. This is the architectural root of the verifier problem of §2: not a defect of the corpus or of the optimisation procedure that more data or better RL can correct, but a direct consequence of forgoing structural priors.

This diagnosis is not unique to us. Bender & Koller (2020) argued that meaning cannot be grounded in pure form, however large the corpus. LeCun (2022) has explicitly proposed that progress beyond the current paradigm requires reinstating world models, hierarchical planning, and predictive structure that transformers do not have. The empirical scaling-law slowdown, documented by the major laboratories themselves, is consistent with this analysis. What none of these critiques articulate, however, is the further consequence we draw here: the same architectural absence that makes a system unverifiable also makes it incapable of fostering the user's own development. Without a shared structural map between agent and user, the conversation collapses into unstructured textual consumption — the user cannot probe specific zones of the agent's reasoning, cannot see what was omitted, cannot internalise a navigable structure. Over time, the agent risks becoming the user's cognitive substrate, homogenising rather than emancipating. The structural verification developed in §4 and the autonomy technology developed in §10 are therefore not two independent virtues of the proposal: they are co-implications of the same architectural decision — to anchor the system to an explicit, global, navigable structure rather than to expect such structure to emerge from attention alone.

3. The verifier problem for human domains

Current reasoning models — OpenAI's o-series, DeepSeek-R1 (DeepSeek-AI 2025), Claude with extended thinking, Gemini Deep Think — share an architectural pattern: they produce an intermediate chain of thought and are trained by reinforcement learning on this chain with a reward signal derived from a verifier. When the verifier is formal, results are notable: AlphaProof and AlphaGeometry 2 (DeepMind 2024) achieved IMO silver-medal level in 2024, gold with Gemini Deep Think in 2025.

The problem appears outside the formal domain. In applied ethics, political arbitration, or public deliberation, there is no objective loss function. PRMs with human annotations have two problems: (i) instability from reward hacking and (ii) prohibitive annotation costs. Apple ML Research (Shojaee et al. 2025) has demonstrated a counterintuitive scaling limit: reasoning effort in tokens increases with complexity up to a point and then decreases despite sufficient budget — symptom of a reasoning that cannot be validated at runtime against an external structure.

Constitutional AI (Bai et al. 2022) and derived proposals — Deliberative Alignment (OpenAI 2024) and variants — mitigate the problem by introducing a textual constitution: a list of principles that the model uses to self-critique and revise its own outputs, eventually with feedback generated by the model itself (RLAIF). This approach is an advance over classical RLHF, but maintains the structural problem: the constitution is text interpreted freely by the model. There is no guarantee that the 16, 75, or 200 textual principles of a constitution operate as a discriminating structure; on the contrary, Turpin et al. (2023) demonstrated that chain-of-thought explanations can systematically diverge from the model's actual internal computation — a 36% accuracy drop on BIG-Bench Hard when unmentioned biases are introduced.

What structural verification [an algorithmic mechanism that validates a model's outputs against an explicit normative function defined over a known ontology] offers is to replace textual interpretation with a measurable computation: instead of asking the model to interpret what "being balanced" means, the dispersion of the response over a canonical set of axes is mathematically defined and directly regularized. The question is which ontology is suitable for this. Classical knowledge graphs (Wikidata, ConceptNet, Cyc) do not offer a normative function: they are descriptive structures. Textual constitutions do not offer structure: they are statements. A metastructure is needed that combines the two: formal structure and explicit normative function. This is what we postulate the Meta-Globàlium offers — and it is the substrate on which Arkadium operates.

Why this substitution is structurally neutral. The central point — often under-articulated in alignment discussions — is that the primitives of the structural verifier are not concepts with axiological weight ("freedom", "justice", "authority", "virtue"), but polar dialectical axes: pairs of poles in tension. A textual constitution gives lists of values, and deciding which values go on the list and with what hierarchy is a culturally marked operation — the principles of a constitution written in California in 2022 are not those of one drafted in Brussels in 2026, nor those of one formulated from a Confucian, Ubuntu, or Buddhist tradition. The structural verifier does not decide which values are good; it only posits that no relevant dialectical axis may collapse to a single pole. The difference is the same as that which separates a grammar from a dictionary: the former is a formal structure that is a candidate for universality, the latter is culturally situated content. The Meta-Globàlium offers a grammar of full reasoning, not a dictionary of correct answers — and herein lies its neutrality.

The paradigmatic case is the classical formula of the Good as equilibrium between the freedom of individuals and the freedom of others (Kant, universal principle of right) — explicit instantiation of the verifier's dynamics on the SUB-OBJ axis applied to the ethico-political domain. Neither absolutisation of the individual (collapse toward SUB) nor dissolution into the collective (collapse toward OBJ): the Good is the geometric condition that neither pole collapses onto the other. The same dynamic projects onto TEO-PRA (coherence between principles and effective action), FEN-NOU (integration of immediate experience and mediated interpretation), PLA-MON (articulation between the stable and the changing). That the formula resonates with the Kantian tradition, with Aristotle (mesotes, virtue as mean), with classical dialectical thought, with Ciceronian prudence, with Thomistic via media, and with Zen Buddhism is not accidental: these are traditions that have independently discovered that the axes of human judgment are reflective and that the fullness of an answer requires not collapsing them. The novelty of the Meta-Globàlium is not to invent this intuition — it is to make it computationally operative through an explicit geometry.

This dissolves the relativism objection. That the verifier does not fix a specific moral content does not entail that all is equal: there are objectively better answers — those that keep the relevant axes alive and in tension — and worse ones — those that collapse to a single pole through simplification, ideology, or reductionism. The criterion of better is structural, derivable, public, and auditable; it is not decided by a list, it is observed over the geometry. The cultural neutrality of the verifier is therefore paid for without recourse to relativism: the structure is a candidate universal, the contents are plural, and the fullness of an answer consists in articulating the latter without collapsing the former. The is/ought barrier is discussed in more detail in §4.3.

Five dialectical orders. To frame technically why a geometric substrate is needed — and not a textual list of principles — we adopt the pedagogical classification of the canonical Globàlium manual (Berenguer 2024). Human thinking can be ordered into five geometric orders by the dimensionality of its representation: D0 (point) = dogmatism, absolute and indisputable truth; D1 (line) = linear thinking, direct confrontation; D2 (plane) = superficial feedback — "like an Excel sheet", the granularity of most current analytic models; D3 (sphere) = spatial perspective with depth (Globàlium's minor model); D4 (hypersphere) = global perspective with temporal and atemporal articulation (major model). The chain of thought of a contemporary LLM operates, at best, at D2: a linear trace with local feedback over the token space. The Meta-Globàlium proposal is reasoning at D3-D4: a spherical/hyperspherical geometry that makes structurally possible a dialectic that cannot be carried out within linear or planar reasoning. The dispersion completeness function 𝓗 defined over the ontology (§4.1) is precisely the mathematical expression that this geometry enables — and that textual lists cannot express.

3.bis Robustness to reward hacking as an evolutionary line

Why the verifier metric has generations, not a definitive version.

Any computable metric of a humanly rich quality — wisdom, dialectical depth, integration — will be gameable. The question is not whether it will, but what institutional structure assumes this condition as the design's starting point. Our answer is that robustness to reward hacking is not a static property of a metric but an evolutionary line: each generation anticipates known failure modes, defines new probes for them, and leaves a public trace of the cycle. We have documented two such cycles, and it is this dynamic — not a definitive version of the verifier — that we consider the methodological contribution.

First Goodhart (𝓗 v1, coverage + entropy). The first version of the structural verifier measured dispersion over the 8 quadrants with coverage and entropy. A response with 8 cardinal-titles and one neutral sentence under each saturated 𝓗 ≈ 1.0 without any internal relation between the poles. List with dialectical pose beat the verifier. The v2 response (deployed 2026-05-07) adds two positive components — axis_explicit (the first paragraph names a dialectical axis) and subordinating_synthesis (frames acting on each other through explicit subordinating verbs) — and rebalances weights so coverage+entropy fall jointly from 0.50 to 0.10. The new metric 𝓦 distinguishes list from dialectic: listed text ≈ 0.15, genuine dialectic ≈ 0.81–0.95.

Second Goodhart (𝓦 v2, structure without wisdom). Within 24 h of the v2 deployment the inverse failure appeared: a response optimized for high 𝓦 often read like a checklist with pose, with visible dialectical structure but lacking the integrative quality the bare-LLM exhibits by default. Optimization to the metric had shifted text toward the form of dialectic, evacuating its substance. The response to this second form of gaming is not a new patch within the metric — it is outside the metric itself: (i) a user-facing parameter — the escope, specified in §5.bis — that moves the response among three registers aligned with the radial PLA-MON pulse; (ii) a wisdom-polish second pass that separates doing the dialectical work from saying it well; (iii) a re-prompt loop with dual-criterion break (§9.5.b) that regulates 𝓦 as an independent control signal, not as an observational metric.

The architectural conclusion is compositional: the combination metric + system prompt + UI + structural loop covers collectively what no single component covers alone. The verifier's versions are public and dated; the cycle is reproducible. The expected robustness is not that of a finished one-dimensional verifier, but of an architecture with layers that audit each other and evolves as experience uncovers new failure modes. This is the difference between treating gameability as a hideable weakness and treating it as a generic property of the problem, integrated into the design.

4. The Meta-Globàlium as structural verifier

As introduced in §1, the Meta-Globàlium is the computational and formalized version of Xirinacs's Globàlium (1997), worked as a metastructure applicable to any domain of knowledge. Geometrically, it is a 4D hypersphere (Xirinacs distinguished a spherical minor model and a hyperspherical major model; the Meta-Globàlium takes the latter). It has three Cartesian axes and one radial axis:

- TEO ↔ PRA (theory ↔ practice): the vertical axis of discernment.

- SUB ↔ OBJ (subject ↔ object): the horizontal axis of tension.

- NOU ↔ FEN (noumenon ↔ phenomenon): the deep axis of appearance.

- PLA ↔ MON (plasma ↔ world): the radial axis of surroundings, from the regenerative center to the unfolded surface.

These eight poles are the 8 primary categories. Dialectical intersection generates the complete set of 80 distinct categories organized in three topological layers (plasmatic, neutral, and mundane) plus the PLA and MON anchorings. Each category has a canonical name (ANA for analysis, SIN for synthesis, AMO for love, EXP for experience, BEL for beauty, COS for cosmos, IDE for ideica, etc.) and occupies a precise position in the projected 3D space. A technical note: the canonical count is 80 distinct categories; the operative database, however, contains 90 entries — the 10 additional ones are topological disambiguations (polar vertices of MON and PLA/MON anchorings, mainly) needed for 3D positioning but not constituting new categories.

The canonical hierarchy of the Meta-Globàlium is, therefore, four levels: 8 → 26 → 80 → 6400. The first three levels come from Xirinacs's Globàlium (8 primary poles, 26 neutral concepts in the minor model, 80 distinct categories in the major model). The fourth level, the specific contribution of the Meta-Globàlium, scales the model to 6400 metacategories (80 × 80 dialectical combinations) with unambiguous definitions generated by structural composition — each metacategory is the canonical crossing of two categories from level 80, and its definition is derived from the composition of its two generators. This fourth level is what gives the Meta-Globàlium the fine resolution needed for unambiguous anchoring in embeddings and retrieval: going from 80 to 6400 anchoring points multiplies the semantic granularity of retrieval by 80 without losing structural traceability.

Concrete examples of metacategories. To make credible the claim of "unambiguous definitions generated by structural composition", four examples from level 6400:

- BEL × CIE = the aesthetic quality of empirical objectivity — the beauty proper to a well-measured fact; the aesthetic dimension of scientific discovery. (BEL: Quality, mundane; CIE: Partiality, neutral. Composition: the mundane category modulates how the neutral category manifests.)

- ANA × MIS = the analysis of the ineffable — the effort of introspection on experiences that words do not exhaust; mysticism as an object of rigorous study. (ANA: Divisibility; MIS: Ineffability. Internal tension between the two operations: this metacategory exists precisely as a point of tension, not as contradiction.)

- EXP × FEL = the experience of happiness — the practical implementation of subjective wellbeing; the transformation of FEL's plasmatic potentiality into sustained vital concretion. (EXP: Applicability, neutral; FEL: Discretionality, plasmatic.)

- HAR × DIV = equity as modality — justice as a specific form of distribution, not as arithmetic equality. (HAR: Equity, mundane; DIV: Modality, mundane. Composition of two mundanes ↔ fine refinement in the manifest zone.)

The general composition rule is: generator1 × generator2 = aspect of the first modulated by the dimension of the second. The unambiguous definition of a metacategory is mechanically derived from its pair of generators and from the topological type of each (plasmatic, neutral, or mundane), without semantic ambiguity. This is the property that enables anchoring 6400 canonical points to the embeddings without collisions.

A culturally anchored reading of the radial axis PLA ↔ MON is the Catalan binomial seny ↔ rauxa: rauxa as fecund impulse, sudden spark welling up from the regenerative center — that is, from PLA, the indeterminate germinal seed; seny as prudent measure, restful judgment anchored in unfolded reality — that is, in MON, the ordered surface of the manifest world. Every act of thought and every living decision oscillates between these two radial poles: neither only rauxa (impulse without world) nor only seny (world without impulse). The Meta-Globàlium captures this balance as structure — regenerativity (§4.4) is its axiomatic formulation — thus offering a formal reception to a cultural intuition encoded in the Catalan tradition.

4.1 Structural layer: dispersion completeness as formal property

The technically decisive move of the Meta-Globàlium with respect to the original Globàlium is the computational formalization of an epistemic/structural property over the ontology: dispersion completeness — a property of cognitive fullness over the question, measurable and external to the model, not yet a normative property.

Let C = {c1, …, c80} be the set of distinct categories of the Meta-Globàlium, and let Q = {q1, …, q8} be the set of eight primary quadrants (PLA, MON, SUB, OBJ, TEO, PRA, FEN, NOU). For a response r generated by an AI model, we define:

- fq(r) ∈ ℤ≥0 — the count of categories cited by r that project onto quadrant q (each category maps to a primary quadrant according to its dialectical position).

- n(r) = |{q ∈ Q : fq(r) > 0}| — the number of quadrants touched.

- pq(r) = fq(r) / Σq' fq'(r) — the empirical distribution.

- H(r) = −Σq pq(r) log pq(r) — the Shannon entropy of the distribution.

The dispersion completeness function is expressed, in the current implementation, as:

𝓗(r) = ½ · n(r) / |Q| + ½ · H(r) / log |Q| ∈ [0, 1]

The first term rewards coverage (having touched diverse quadrants); the second rewards uniformity of the distribution (not having concentrated). The function can be generalized, in future versions, to the totality of the 80 categories rather than only the 8 quadrants, with a significant increase in granularity.

Why 8 quadrants — and not 4, 16, or 64. The granularity of 8 is not arbitrary. Three considerations justify it. Structural: 8 = 2³ corresponds to the octants of a cube generated by three dialectical Cartesian axes (OBJ-SUB, TEO-PRA, NOU-FEN), plus the two extremes of a fourth radial axis (PLA-MON). It is the minimum dimensionality that covers the fundamental philosophical distinctions that the tradition identifies as irreducible poles of human knowledge. Cognitive: 8 falls within the range of human memory capacity (Miller 1956: 7±2 elements simultaneously tractable) — beyond that, coverage ceases to be inspectable for a human user. Operative: 8 yields a practical balance between granularity (too few quadrants collapse relevant distinctions) and statistical robustness (too many quadrants produce sparseness where few citations cannot reliably estimate 𝓗). Future versions using the 80 categories do not abandon the 8 quadrants — they refine them, projecting each category onto its primary quadrant while adding a dimension of fine granularity.

Two levels of formalization. The 𝓗 formula above operates at the mechanical level of the 8 primary quadrants — that is what the system computes at runtime. The six axiomatic principles formulated in §4.4 (and the eight essential mathematical operations — equality, polarity, distinction, correspondence, similarity, divergence, convergence, difference) operate at a different axiomatic-semantic level: they are the dialectical structures that the quadrants instantiate geometrically. A response with high 𝓗 has covered diverse quadrants; a response satisfying the axiomatic principles has paired unifying operations with divisive operations on each dialectical axis. The current version of the verifier implements the former (𝓗 over quadrants); extension to verification over paired operations is part of the implementation roadmap (§12).

What the 𝓗 function measures is, therefore, dispersion of citations over dialectical poles, not a moral decision: a property of structural non-omission over the plurality of access modes that the Meta-Globàlium encodes. The structural layer is what the system does algorithmically; its epistemic justification and normative resonance appear in §4.2 and §4.3.

It is important to emphasize what this function is not: it is not a substitute for factual judgment. A factually incorrect response is not redeemed by being dispersed. 𝓗 operates as a posterior structural regularizer over responses already factually valid — a second layer that penalizes reductionism. It is the operative piece that Arkadium's verifier computes at runtime over each response of the model.

4.2 Epistemic layer: globalistic truth

Of what is a response with high dispersion completeness evidence? This manifest proposes that this completeness is globalistic truth: a non-monological conception of truth that recognizes the irreducible plurality of its access modes. The dialectical completeness of a human phenomenon is not captured from a single pole; a truly complete response integrates OBJ + SUB + TEO + PRA + FEN + NOU + PLA + MON. A response that systematically covers a single pole is not partial but constitutively incomplete as representation of the phenomenon. Dispersion completeness quantifies exactly this: whether the geometry of access modes is represented.

The position is compatible with a recognizable philosophical tradition, without claiming to appropriate it. It resonates with Gadamer's hermeneutics (Truth and Method, 1960), for whom human truth unfolds in the fusion of horizons and is not reduced to objectifying method; with Habermas's theory of communicative action, where the validity claim is articulated in more than one dimension; and with the Heideggerian notion of aletheia as always-partially-concealed unconcealment. The Meta-Globàlium does not propose a new philosophical theory of truth: it computationally encodes an intuition already present in this non-monological tradition and gives it operative form. The difference is measurability: where Gadamer speaks of fusion of horizons in open hermeneutic terms, the Meta-Globàlium offers eight concrete quadrants on which the projection is computable. This is the new philosophical move: bringing a plural, non-monological truth into the field of an AI that must compute it. It is, moreover, more externally defensible than speaking of "the good": the alignment community finds defining good intractable but accepts that a systematically partial response about a human phenomenon is epistemologically defective.

Where the original contribution lies. The 𝓗 formula (½ coverage + ½ normalized entropy) is not mathematically novel: it combines standard metrics from information theory. The axiomatic principles reformulate dialectical contents present in the philosophical tradition (identity-alterity, holicity-partiality, etc.) and in Xirinacs. The original contribution to the AI alignment field is not the formula nor the principles but the displacement of the locus of verification: instead of pointing the quality claim to a textual constitution interpretable by the model itself (Constitutional AI) or to a PRM trained with manipulable human feedback, we point it to a ontological geometry external to the language model, computable as objective structural property. The novelty is architectural: we replace a semantic proxy vulnerable to manipulation with a topological substrate that the language model does not control — and therefore cannot easily hack. Globalistic truth as structured plurality of access modes is the philosophical language that justifies why this displacement makes epistemic sense.

4.3 Normative layer: the Xirinacsian heritage of the Good as harmony

The globalistic tradition, from Llull and Sibiuda through Xirinacs, does not rigidly separate dialectical completeness from the Good. The Xirinacsian intuition — formulated in the original Globàlium (1997) — is that the Good manifests as harmony between parts: evil is lack or excess relative to the whole; virtue lies in balance. Read in light of the hierarchy this manifest articulates, this contribution derives from dialectical completeness, it does not substitute it: globalistic truth — the dialectical integration of the phenomenon's poles — is the prior structural condition on which the tradition has built its ethical intuition of the Good.

The hierarchy matters for three reasons. (i) It is not the verifier that decides what the Good is: it computes dispersion completeness (layer 1) and justifies it as globalistic truth (layer 2); the Good as harmony appears as normative legacy of the Xirinacsian tradition, not as system output. (ii) Continuity with the tradition is preserved: the Good does not disappear from the manifest, it shifts from technical center to respected legacy. (iii) The ANA → SIN → AMO → EXP cycle preserves its meaning: the AMO phase (§4.5) orients dispersion completeness toward the common good traditionally understood as harmonic balance — not as moral computation by the system, but as direction of reasoning that leaves the final judgment to the human subject. The center of the paper is thus globalistic truth as operationalizable dispersion completeness; the Good as harmony figures as Xirinacsian inheritance.

The is/ought barrier. It is important to make explicit the separation between facts and values (David Hume, A Treatise of Human Nature, 1739): from the structural description of a response, no moral obligation derives by logical inference. The 𝓗 function does not say that a response with high 𝓗 is "morally better"; it only says it is epistemically fuller. The inference Good ⇐ harmony is a philosophical position external to the system — the Xirinacsian position, heir to the Catalan integrative tradition — that the reader may accept, refute, or qualify at their own responsibility. Arkadium does not operate on this inference: the verifier operates strictly on 𝓗 and globalistic truth (layers 1 and 2). Layer 3 — the ethical reading of harmony — appears as a cultural framework of interpretation for the human subject, not as algorithmic output. The system does not "derive the Good" from any structural property; it only measures the structural property and leaves moral reading outside its field of operation.

4.4 Six axiomatic principles

The book Globalística (in preparation) formalizes the Meta-Globàlium with six axiomatic principles derived by fusion of the dialectical poles — an expansion of the four original principles of the Globàlium (identity, alterity, holicity, universality). Summarized here:

Note on originality: the dialectical contents of these six principles (identity-alterity, holicity-partiality, etc.) are not original inventions of this work — they are reformulations of philosophical motifs present in the Xirinacsian Globàlium and, before it, in classical integrative thought (Llull, Sibiuda, Pujols, Hegel, German idealism, hermeneutics). The original contribution of the Meta-Globàlium is operational: positing these six principles as structural constraints on the output of an LLM, anchored to a computable geometry. The novelty does not lie in the principles but in how they are projected — and in how the 𝓗 verifier can use them as learning and correction signal at runtime, without requiring textual interpretation by the model itself.

- Regenerativity (PLA-MON axis, radial). Every mundane operation has a germinal origin in the plasmatic zone and a destination of fullness in the mundane zone; the cycle between potency and act is bidirectional.

- Interdependence (distinction ↔ correspondence, OBJ-SUB axis). Every part is distinguished as itself and at the same time corresponds to all others; there is neither absolute isolation nor absolute fusion.

- Plurisingularity (equality ↔ polarity, NOU-FEN axis). Deep equality at the noumenal level does not exclude manifest polarity at the phenomenal level; nor does polarity exclude a radical equality of essence.

- Totality (globality ↔ locality, TEO-PRA axis). The whole integrates the family of unifying operations (equality, similarity, correspondence, convergence) with the family of divisive operations (polarity, distinction, difference, divergence) — global reach of theory and local instantiation of practice in a single structure.

- Mutability (similarity ↔ divergence, MTF-ART diagonal). The model contains stable patterns that repeat invariantly at all scales and variants that branch permanently; stability and variability coexist in constitutive tension — mutability is the capacity to change while maintaining identity.

- Integrity (convergence ↔ difference, MTP-CIE diagonal). The whole integrates by holistic convergence of all parts into a single set, while maintaining the articulated partiality of each part — holicity at the top, partiality at the bottom.

Classical axiomatic lineage. The six principles inherit a prior dialectical formulation — that of the original Globàlium and the book Globalística in preparation — that remains valid as complementary axiomatic language. The correspondence table is:

| Principle | Axiomatic poles (classical formulation) | Essential operations (computational formulation) |

|---|---|---|

| 1. Regenerativity | potentiality ↔ authenticity | (radial PLA-MON cycle over the 8 operations) |

| 2. Interdependence | identity ↔ alterity | distinction ↔ correspondence |

| 3. Plurisingularity | uniqueness ↔ multiplicity | equality ↔ polarity |

| 4. Totality | globality ↔ locality | (macro-dialectic: 4 unifying ↔ 4 divisive) |

| 5. Mutability | stability ↔ variability | similarity ↔ divergence |

| 6. Integrity | holicity ↔ partiality | convergence ↔ difference |

The computational reformulation does not substitute the classical poles but anchors them to a univocal operational substrate: the axiomatic poles describe which ontological dialectic each principle captures; the operations describe how it manifests mathematically over the neutral cube. Four principles (1, 4 originals from the Globàlium: identity, alterity, holicity, universality) are collected here as poles of the new set of six.

This architecture anchors each principle to an essential mathematical operation over the neutral cube: 4 unifying operations (equality, similarity, correspondence, convergence) and 4 divisive operations (polarity, distinction, difference, divergence) that the principles dialectically pair. Six structural degrees of freedom — three cardinal axes, two interior diagonals, and one radial axis — on which the six principles rest. These six principles are not ornaments: they are what enables deriving the dispersion completeness function as quantification of a structural property over the ontology, not as arbitrary heuristic.

4.4.b Eight maximal dialectics

The 6 axiomatic principles structure the model. Over this structure, the neutral cube generates 8 maximal dialectics — all pairs of neutrals opposed by central inversion through the cube center: the distance-4 dialectics in Xirinacs's sense documented in Globalística. None is a new axiom; they are structural consequences of the cube that the structural verifier uses as auditing patterns. They divide into two geometric families:

4.4.b.1 Disciplinary dialectics (vertex-to-vertex)

Four diagonals connecting opposite vertices of the cube. Each pair relates two disciplines of human understanding at maximum opposition:

| Dialectic | Disciplinary poles | Classical tension | Source |

|---|---|---|---|

| LOG ↔ MIS | formality ↔ ineffability | Wittgenstein/Tractatus: what can be said vs what must be left in silence | Globalística, LOG-MIS duality |

| TEC ↔ MIT | replicability ↔ orientability | Technology requires tools of human orientation | Globalística, TEC-MIT duality |

| EST ↔ ETI | informality ↔ reciprocity | Rule-free judgment ↔ reciprocal duty (Kant: Critique of Judgment ↔ Practical Reason) | Classical tradition |

| PSI ↔ IDE | interactivity ↔ regulability | Empirical living ↔ ideal regulation | Classical tradition (Hume ↔ Plato) |

4.4.b.2 Operational and addressor dialectics (edge-to-edge)

Four diameters connecting midpoints of opposite edges through the center. Each pair relates two cognitive or semiotic capacities at maximum opposition:

| Dialectic | Operational/addressor poles | Classical tension | Family |

|---|---|---|---|

| ANA ↔ AMO | divisibility ↔ synchronicity | Analytical logos ↔ unitive eros (mental separation ↔ affective union) | Operational (method) |

| SIN ↔ EXP | integrality ↔ applicability | Theoria ↔ praxis (conceptual synthesis ↔ practical implementation) | Operational (method) |

| STT ↔ SGE | interpretability ↔ representability | Hermeneutics ↔ semiosis (receiving meaning ↔ producing signs) | Addressor (semiotic) |

| STM ↔ SGT | sensibility ↔ referentiality | Phenomenology ↔ semiotics (immediate presence ↔ mediated reference) | Addressor (semiotic) |

Each dialectic connects two points opposite by central inversion — Cartesian coordinates symmetrically negative. An agent response is considered biased when it concentrates on a single pole of these 8 diagonals without ever pointing to the opposite pole: an all-technical response (TEC) without any narrative orientation (MIT), all-analytical (ANA) without any synchronizing love (AMO), or all-interpretive (STT) without representational capacity (SGE). The structural verifier penalizes these biases as a complement to the dispersion completeness function: globalistic truth requires not only touching dialectical poles of the 6 principles, but also not concentrating on a single pole of any of the 8 maximal dialectics.

In sum: 6 axiomatic principles (3 cardinal axes + 2 face diagonals + 1 radial axis) + 8 maximal dialectics (4 vertex-to-vertex diagonals + 4 edge-to-edge diameters) = 14 structural elements that exhaustively cover the classes of geometric relations of the neutral cube.

4.4.c Systematic canonical correspondences

Beyond the 14 structural elements, the neutral cube reveals systematic correspondences with canonical formal systems. Each of the 8 vertices has an associated mathematical operation, Boolean logic gate, and set-theoretic operation:

| NEU | Math operation | Logic gate | Set-theoretic operation |

|---|---|---|---|

| NOU | equality (=) | BUFFER | Empty set ∅ + universal U |

| OBJ | distinction | NOT | Partition |

| MTP | convergence (∩) | AND | Power set P(A) |

| SUB | correspondence (↔) | OR | Cartesian product A×B |

| MTF | similarity (~) | XNOR | Inclusion A⊆B |

| CIE | difference (−) | XOR | Set difference A−B |

| ART | divergence (∇⋅) | NAND (universal) | Symmetric difference AΔB |

| FEN | polarity (±) | NOR (universal) | (under exploration) |

NAND (ART) and NOR (FEN) are the universal Boolean logic gates — any function can be constructed using only one of them. This grants these two operations a special generative status within the neutral cube.

System of causes (Aristotle ↔ Globàlium)

Aristotelian causes map systematically to cube positions: material/formal/final cause → OBJ; efficient cause → SUB; exemplary cause → DIV (worldly MIT); contextual cause → FEN; essential cause → ORG (plasmatic MTF). This allows the verifier to measure causal coverage: an explanation that touches only material/formal cause without final, efficient, or contextual cause is marked as causally incomplete — analog of dispersion completeness applied to the Aristotelian plane.

Three types of inference ↔ NEUs

- Deduction (general → particular): LOG (Formality), ANA (Divisibility)

- Induction (particular → general): IDE (Regulability), SIN (Integrality)

- Selective abduction (to the best explanation): EST (Qualitability)

- Creative abduction (generation of new hypotheses): MIT (Orientability)

The verifier can measure epistemological coverage: a purely deductive response without induction or abduction is epistemologically partial.

Alchemical macro-dialectic Solve-Coagula

Processual complement to the 6 axiomatic principles:

- Solve-side (dissolve/differentiate): ANA + CIE

- Coagula-side (coagulate/integrate): SIN + MTP

This macro-dialectic connects the processual dimension of the Method (ANA-SIN) with the operational dimension of face operations (CIE-MTP), forming a complete alchemical cycle of conceptual transformation.

For full canonical development — including 4 communication levels, radial temporal axis, canonical citations, and structural numerology — see the document docs/canonical-mappings.md in the repository.

4.5 The inference cycle FEN → ANA → TEO → SIN → NOU → AMO → PRA → EXP

The Global Method is the auditable inference architecture that the Meta-Globàlium proposes, derived from the volta of application of the model (named method volta in Globalística) — one of the six great circles that traverse the hypersphere. The simplified form (ANA → SIN → AMO → EXP) is pedagogically useful, but the complete form has eight stations that alternate ontological poles (FEN, TEO, NOU, PRA — the four cardinal poles of axes NOU-FEN and TEO-PRA) and method phases (ANA, SIN, AMO, EXP). Each method phase is, properly speaking, a transition between two ontological states:

| Station | Type | Function |

|---|---|---|

| 1. FEN | ontological pole | Starting point: phenomenological capture, raw data, concrete observations |

| 2. ANA (analysis) | method phase (FEN → TEO) | Decomposition of phenomena into analyzable elements; identification of relevant axes |

| 3. TEO | ontological pole | Theoretical state: emergent conceptual framework, formulated hypothesis |

| 4. SIN (synthesis) | method phase (TEO → NOU) | Theoretical integration pointing to the essence; consultation of neighboring and opposing categories |

| 5. NOU | ontological pole | Noumenal state: contact with deep essence, orientation toward the common good understood as harmonic balance |

| 6. AMO (love) | method phase (NOU → PRA) | Loving projection of essence toward action; transcendent application |

| 7. PRA | ontological pole | Practical state: concrete, measurable implementation, submitted to the structural verifier |

| 8. EXP (experience) | method phase (PRA → FEN) | Experience harvested that returns to new phenomena, closing the cycle |

The four method phases are not abstract operators: they are transitions between cardinal coordinates of the model. ANA leads from phenomenon to theory, SIN leads from theory to noumenon, AMO leads from noumenon to practice, EXP leads from practice to a new phenomenon. The short form ANA → SIN → AMO → EXP is correct as a list of operations, but hides the four intermediate ontological states that connect the phases — the complete reading requires the eight stations.

This pipeline transforms an AI architecture into an auditable sequence: each station leaves a trace projectable onto known Cartesian coordinates (FEN, TEO, NOU, PRA as states; ANA, SIN, AMO, EXP as operations). It replaces the opacity of free chain-of-thought with a canonical traversal of eight milestones. It is, moreover, the inference architecture that Arkadium executes step by step (see §9).

The cycle is not linear but recursive: each method phase can internally execute the complete eight-station cycle over its own subtask. The ANA of a complex problem may require an entire sub-volta (sub-FEN → sub-ANA → sub-TEO → sub-SIN → sub-NOU → sub-AMO → sub-PRA → sub-EXP) before returning to the TEO of the parent cycle. This recursivity is what enables operating over problems of any degree of arbitrariness: the Global Method is fractal in its functioning, just as the Meta-Globàlium is fractal in its structure — each category potentially contains the entire model within itself, as the principle of Integrity (convergence ↔ difference) already anticipates in §4.4.

4.5.c Solve-Coagula iteration of the Method (meta-operation)

Beyond the fractal recursivity of §4.5, the Method operates under an iterative meta-operation called Solve-Coagula (from the alchemical aphorism solve et coagula, Basil Valentine, 15th century). This meta-operation is not a new structural element — the 14 elements of the neutral cube (6 principles + 8 dialectics from §4.4) describe how the cube is; Solve-Coagula describes how it is used dynamically.

| Family | NEUs | Function |

|---|---|---|

| Solve (dissolve/differentiate) | ANA (Divisibility) + CIE (Partiality) | Decompose, criticize, distinguish |

| Coagula (coagulate/integrate) | SIN (Integrality) + MTP (Holicity) | Synthesize, totalize, unify |

The agent grounded in the Meta-Globàlium applies Solve-Coagula as recursive iteration over its own responses:

- Iteration 0: Question → initial response (executing the Method cycle)

- Iteration 1: Solve the initial response (critical analysis, gap identification) → Coagula improved response

- Iteration N: until convergence (stable 𝓗) or threshold (𝓗 ≥ 0.85)

At each iteration, the verifier measures solve_coagula_balance ∈ [0,1] (1.0 = perfectly balanced ANA+CIE with SIN+MTP). An all-Solve response without Coagula is analytically fragmented; an all-Coagula response without Solve is dogmatic.

This meta-operation formalizes Arkadium's runtime re-prompt loop: what the system already does empirically becomes inscribed in the model as a canonical operation.

Directional applicability: Solve-Coagula IS literally the FEN→NOU movement (essentialization — extracting essence from phenomenon). Therefore it applies only to voltes that essentialize:

- ✅ Volta of Application (FEN→ANA→TEO→SIN→NOU→...) — ANA+SIN constitute direct Solve-Coagula

- ✅ Volta of Knowledge (FEN→ART→SUB→MTP→NOU→...) — traverses the 4 face operations that essentialize

- ❌ Volta of Orientation (PRA→STM→SUB→STT→TEO→...) — lateral cycle without FEN-NOU traversal; Solve-Coagula inapplicable

- ❌ Volta of Relation (PLA→CNF→NOU→CMN→MON→FEN→...) — traverses NOU and FEN through the radial PLA-MON axis but in NOU→FEN direction (self-localization, not essentialization); Solve-Coagula inapplicable

Therefore the verifier only measures solve_coagula_balance when the response includes indications of essentialization (cites ANA, CIE, SIN, or MTP). For orientation questions (lateral: "what do I feel?", "what do I commit to?") and relation questions (radial: "where am I?", "what is the meaning of this?"), the metric does not apply — these traverse axes other than FEN→NOU.

4.5.b Three voltes of the Global Method

The cycle FEN → ANA → TEO → SIN → NOU → AMO → PRA → EXP exposed in §4.5 is the Volta of Application, one of the three basic voltes of the Meta-Globàlium. The book Globalística documents 6 voltes in total — six great circles that traverse the hypersphere — three basic voltes that cycle through the 8 cardinals without traversing PLA/MON, and three radial voltes that traverse the PLA-MON axis (tempeternal). Arkadium's structural verifier can select the volta appropriate to the type of question instead of always applying the application volta. The three basic voltes (using the nomenclature of the Globàlium small manual; corresponding names in Globalística noted where they differ) are:

Volta of Application (central meridian) — to think and do well

Cycle: FEN → ANA → TEO → SIN → NOU → AMO → PRA → EXP → FEN

Method operations: ANA → SIN → AMO → EXP. Suitable for: analysis, reasoning, problem-solving, planning. The canonical form for questions like "How to analyze X?", "What should be done in this case?", or "What is the best method for Y?". Detailed in §4.5. Stages (per the small manual): KNOW THYSELF (FEN→TEO) → ACCEPT THYSELF (TEO→NOU) → SURPASS THYSELF (NOU→PRA) → LIBERATE THYSELF (PRA→FEN). Named Volta of Method in Globalística.

Volta of Orientation (lateral meridian) — to find direction and meaning

Cycle: PRA → STM → SUB → STT → TEO → SGT → OBJ → SGE → PRA

Method operations: STM → STT → SGT → SGE (the four addressors: feeling / sense / signified / sign). Traverses the lateral meridian connecting the four cardinal poles SUB-OBJ-TEO-PRA via the four signaling categories. Suitable for: orientation, alignment, conflict resolution, finding personal direction and meaning. The canonical form for questions like "What do I feel?", "What do I want?", "What do I commit to?", "What conditions do I need?". Stages (per the small manual): DESIRES → ASPIRATIONS → COMMITMENTS → CONDITIONS. Named Volta of Revelation in Globalística, where it is described as resolving conflicts and revealing versions of reality through the four addressors.

Volta of Knowledge (equator) — to acquire knowledge and self-knowledge

Cycle: FEN → ART → SUB → MTP → NOU → MTF → OBJ → CIE → FEN

Method operations: ART → MTP → MTF → CIE. Traverses the horizontal SUB-OBJ axis crossing the four disciplinary octants MTP-MTF-CIE-ART. Suitable for: learning, research, exploration, self-knowledge, criterion formation. The canonical form for questions like "What do I know about X?", "Who am I in relation to X?", or "What do science, art, spirituality, and philosophy contribute to X?".

The 4 disciplinary stations are the four complementary modes of knowing:

- MTP (metapsychics): spiritual self-knowledge

- MTF (metaphysics): philosophical knowledge

- CIE (science): measurable objective knowledge

- ART (art): aesthetic self-knowledge

Stages (per Globalística): LET GO (FEN→SUB) → MELT (SUB→NOU) → LET BE INSPIRED (NOU→OBJ) → LET BE ENACTED (OBJ→FEN). Generates fullness through integration of the four modes of knowledge (none sufficient alone).

Volta selection by question type

| If the question is... | Appropriate volta | Topology |

|---|---|---|

| How to do X / What to think about X | Application | central meridian (NOU-FEN × TEO-PRA) |

| What do I feel / commit to / what direction for me | Orientation | lateral meridian (SUB-OBJ × TEO-PRA via addressors STM/STT/SGT/SGE) |

| What do I know about X / Who am I in relation to X | Knowledge | equator (SUB-OBJ × MTP-CIE) |

The Arkadium agent may, in an evolved version, detect the type of question and activate the corresponding volta — the structural verifier then evaluates coverage over the 8 stations of the selected volta, not always over the 8 of the application volta. The current implementation of Arkadium (see §9) executes only the Volta of Application; the others are referenced as roadmap.

The other three voltes (PLA-MON traversing)

The book Globalística documents three additional voltes that traverse the radial PLA-MON axis (the tempeternal dimension). More specialized, not expanded here, referenced for completeness:

- Volta of Universal (PLA-MON × TEO-PRA, categories CAS-COS-COV-CAV): synthesizing complete reality, cosmovision — chaotic / cosmic / cosmovision / chaovision modes

- Volta of Relation (PLA-MON × NOU-FEN, categories CNF-CMN-EXC-ATZ): situating oneself with respect to reality — null (CNF, withdrawal) → total (CMN, universal connection) → precise (EXC, careful distinction) → confused (ATZ, exposure to contingency)

- Volta of Consistency (PLA-MON × SUB-OBJ, categories FEL-INT-AFI-BOS): quality, bond, consistency-personality — happy / intentional / bonded / fused modes

Total: 6 voltes that exhaustively cover the great functional circles of the Meta-Globàlium. The complete Global Method documented by Berenguer in Globalística contemplates "the passage through all the great-circle voltes of the model" — Arkadium's architecture is, in this sense, a canonical simplified version that focuses on the application volta and opens the way to the other five.

5. Canonical ontological directions: a contribution to portable audit

From post-hoc bottom-up interpretability to an external canonical top-down basis.

Main contribution of this section. We propose the Meta-Globàlium as an external, a priori, model-agnostic canonical basis of interpretable directions onto which any AI model can be projected, compared, and audited. We name this contribution an ontology of directions for portable audit of activation steering — a layer of top-down interpretability genuinely external to the model, not empirically discovered from its internals, but derived from a reflective synthesis of human judgment. What the field currently calls "top-down" is, methodologically, bottom-up in disguise: directions are extracted from the model itself via contrastive prompts (RepE) or activation decomposition (SAE). What we propose here is full top-down: directions are given before looking at any concrete model, derived from an ontological cartography of human judgment — the Meta-Globàlium — and applicable transversally to any architecture.

This contribution positions itself in relation to four live traditions of interpretability and alignment:

| Approach | Origin of directions | Portability | Granularity | Nature |

|---|---|---|---|---|

| Sparse Autoencoders (Anthropic) | empirical, decomposition of internal activations | none (weight-specific) | feature-level (~10⁶) | empirical bottom-up |

| Probing classics (Tenney et al.) | empirical, supervised classifiers | none (task-specific) | task-level | supervised bottom-up |

| Representation Engineering (Zou et al. 2023) | empirical, Linear Artificial Tomography over contrastive prompts | limited (per concept) | concept-level (~10¹) | top-down by name, bottom-up by method |

| Constitutional AI (Bai et al. 2022) | textual, principle lists interpreted by the model | partial (manipulable text) | textual-normative | textual top-down |

| Meta-Globàlium (proposed) | a priori, ontological, philosophically grounded | full (model-agnostic) | 80 dir. + 8 quadrants + 26 NEU | ontological top-down |

The mechanistic interpretability agenda — sparse autoencoders, circuit identification, monosemantic decomposition — has delivered significant advances: recent studies have catalogued millions of discrete features within models like Claude. But the practical ceiling is visible: decoding a complete reasoning — not an isolated feature — remains unfeasible at industrial scale. Turpin et al. (2023) demonstrated that chain-of-thought explanations are not faithful to the model's internal computation. The field's growing conclusion is that bottom-up interpretability must be complemented by top-down interpretability (Zou et al. 2023): forcing the model to operate on interpretable primitives known from the start, rather than attempting to discover them post hoc.

The work of Zou et al. (2023) on representation engineering (RepE) offers the technical foundation for intervening in the model: concepts such as truthfulness, danger, positive affect, or intentionality are encoded as linear directions within the latent space, monitorable and intervenable through projection and vector-sum operations — activation steering. RepE shows that a limited number of canonical directions can capture behavioral dimensions that previously seemed irreducibly distributed. What is missing in this approach to be fully operative in human domains is a canonical, structurally complete, a priori-grounded set of directions onto which to project and audit. RepE provides the how; the Meta-Globàlium provides the which.

The operative idea: a model's response is projected onto each of the 80 canonical directions to produce an interpretable activation vector; directions with high projection constitute the touched categories of the response; the structural verifier computes 𝓗(r) over this vector. The response thus becomes auditable in a known space, not in an opaque latent space. Arkadium implements this idea in its starting form — categories are detected in the model's output text and mapped to quadrants — and opens the way to a more mature form, with embeddings anchored directly to the ontological poles, as the next step (§11.2).

Complementarity, not rivalry, with mechanistic interpretability. Anthropic's sparse autoencoders discover features post hoc — specific to a model and a concrete weight version — and produce catalogs without fixed semantic labels. The Meta-Globàlium can serve, reciprocally, as a canonical ontological label set for these discovered features: each feature can be projected onto the 80 directions and thus receive a globalistic signature — a stable ontological description, comparable across models and across versions. The two approaches are complementary and conceptually composable: bottom-up discovers what is inside the model; ontological top-down provides an external shared vocabulary for naming it. Our thesis is that integrating these two layers — empirical discovery + external canonical basis — is the most fertile path toward an interpretability that is both rigorous and communicable.

From this derive three practical implications that none of the individual approaches offers separately: (i) interpretability becomes portable across models — the same set of canonical directions can evaluate Claude, GPT-4, Llama, Mistral, Gemini —, providing a tool for cross-model comparison that does not exist in stable form today; (ii) regulators have a stable framework to define AI audits independent of provider and model generation; (iii) citizens obtain a shared ontological vocabulary to communicate with AI systems — the axes are reflective polarities (subject/object, theory/practice, phenomenon/noumenon, plasma/world) recognizable by any reflective human, not internal mathematical jargon of the model.

5.bis Escope: a user-facing control surface for the PLA-MON register

Architectural answer to the second Goodhart documented in §3.bis.

The second Goodhart of §3.bis — 𝓦-optimization that shifts text toward the form of dialectic and evacuates substance — cannot be solved within the metric itself. A control surface outside the metric is needed, allowing the speaker to move among three viable registers, aligned with one of the canonical Meta-Globàlium directions: the radial PLA-MON axis (radical fold — mature unfolding). This control surface is the escope, a user-facing parameter implemented in the Phase 1.5 deployment (2026-05-08).

Escope operates on a discrete radial scale of three modes:

- escope = −1 (general / PLA-leaning). Response in accessible prose, with no cardinal codes visible in the final text. Dialectic lives in syntax, not in acronyms. Privileges connotative description, smooth tempeternal rhythm, lived examples. Appropriate when the response is consumed as text by non-specialists.

- escope = 0 (balanced). Equilibrium between visible dialectical structure and readable prose. Codes can appear when they make the reasoning clearer, not as decorative tags. Appropriate for most open human questions.

- escope = +1 (focal / MON-leaning). Specific, concrete response, anchored to cases, authors, data from the exact domain. Every general claim needs anchoring. Appropriate when the question demands operational decision or explicit empirical reference.

Modulation in four layers. Escope is not a tone added post-hoc but a structural operation over the generation-and-verification pipeline:

- System prompt: a mode-specific modifier (general / focal) is appended to the base system prompt; the balanced mode appends no modifier.

- Generation:

max_tokensadjusted per mode (2048 to 3072). - 𝓦 thresholds: the dialectical core (

pair,tension,syn_anchor) stays invariant at 0.50 — dialectic is always required. Form requirements scale with escope:Component general (−1) balanced (0) focal (+1) wisdom_floor0.60 0.65 0.65 w_axis_threshold0.30 0.50 0.60 w_subord_threshold0.30 0.50 0.50 factual_floor0.30 0.40 0.55 - Two-pass polish: by default in general and balanced modes, a second pass strips cardinal codes and visible scaffolding while preserving the underlying dialectic. The polish is disabled by default in focal mode — visible concreteness is the contract. Measurable consequence:

𝓦(polished) ≈ 0in general/balanced modes because polish purges the markers 𝓦 measures. The primary metric logged is the draft (structure), and the polished version is what the user sees as final text.

Empirical validation of the radial control. Three live tests (2026-05-08, one question per mode, msg ids 98 / 100 / 102 in the messages table) confirm the expected behavior: factual density scales monotonically with escope (0.015 → 0.294 → 0.500), components axis_explicit / subord_synthesis / synthesis_anchor are purged to 0 in general mode (polish effect) while dialectical_pair and tension_density are preserved around 0.75 (the underlying dialectic survives the polish). This pattern is the empirical materialization of the principle: escope moves the form of the response without damaging its internal tension.

Phase 4 — schema + dual-criterion break. The Phase 4 deployment (2026-05-08) completed the cycle by turning 𝓦 into an independent control signal: the messages table now persists wisdom_score and the 7 components as queryable columns (not only inside metrics_json); the re-prompt loop only exits on quality if simultaneously 𝓗 ≥ 0.85, 𝓦 ≥ wisdom_floor, and 𝓕 ≥ factual_floor; the textual feedback for the re-prompt is escope-aware — nudges for axis_explicit and subord_synthesis have three variants (general / balanced / focal) and a final ACTIVE REGISTER clause prevents structural fixes from dragooning the response into a mode the user did not ask for. Full specifications: docs/escope-parameter-design.md and docs/wisdom-score-design.md §6.4.

Ontological reading. Escope is not an arbitrary setting: it is the user-facing materialization of one of the eight Meta-Globàlium canonical directions (the radial axis D4, PLA-MON). This is precisely §5's promise: that canonical ontological directions become portable control surfaces — the user can move along a known axis without learning the model's internal language. Escope is, if you will, the first user-facing activation steering exposed as a semantic parameter, not as a latent vector.

6. Neuro-symbolic AI as industrial strategy

The hybrid architecture neural proposal + structural verification has ceased to be an academic niche and become a dominant industrial strategy on fronts where brute scale is not enough:

- AlphaProof / AlphaGeometry 2 (DeepMind 2024): the combination of an LLM (intuition) with a strict symbolic verifier based on Lean (rigor) achieved IMO silver-medal level in 2024, and gold with the Deep Think version of Gemini in 2025.

- GraphRAG (Edge et al. 2024, Microsoft Research): the retrieval approach based on an entity graph built by the LLM itself substantially surpasses flat vector search on global questions over extensive corpora. It acknowledges that vector semantic representation has a ceiling when the question requires global synthesis, and that explicit relational structure is needed.

- World models (Yann LeCun's V-JEPA, DeepMind's Genie): admit that purely textual token representation is insufficient for physical and temporal reasoning, and reintroduce world models as intermediate structure.

- AlphaFold and its succession: protein folding resolved not by brute force but by architecture with appropriate geometric inductive biases.